In the quest for efficiency and innovation, organizations often rush to automate their processes without proper governance. In doing so, many risk creating a “Frankenstein” system: unstable, unpredictable, and ultimately destructive to organizational value.

This monster is born from good intentions and poor foresight: endless customization, forgotten add-ons, and chaotic software logistics.

The consequences are real: operational inefficiency, technical debt, compliance gaps, and even ethical missteps. To tame this beast, businesses need governance that lives within the architecture, a fundamental part of the system rather than a checklist of policies.

A Recipe for Disaster

As Karyna Mihalevich, Chief Product Officer and AI Ambassador at CLARITY, puts it, “Frankenstein systems arise from governance failures, creating tangible risks that impact the entire business.”

AI systems have inherent limitations. What turns those limitations into enterprise risk is the absence of clear governance, architectural discipline, and operational controls. Without them, known AI weaknesses are left unmanaged and begin to affect decision-making, compliance, and trust at scale.

Common risk areas include:

- AI Hallucinations: Generative AI can produce seemingly plausible but entirely false information, leading to misguided decisions.

- Data Security & Privacy Risks: Without stringent controls, unauthorized access, data breaches, and compliance violations pose a significant danger.

- Copyright & IP Uncertainties: The legal landscape surrounding AI-generated content remains murky, posing risks for organizations using such technologies.

- AI Bias: Significant racial and gender biases have been found in AI outputs, calling into question the fairness of automated decisions.

- Over-reliance on AI: Excessive dependence on automation can erode human insight, critical thinking, and creativity. Teams may lose the ability to challenge assumptions or innovate, leaving the organization vulnerable to unexpected disruptions and strategic missteps.

Why Governance Matters

Automation governance must be engineered into the system itself. Policies on paper are not enough; governance must shape how processes, systems, and data flows are designed and used. Gartner stresses multi-level structures – enterprise committees for strategy, operational governance per application, and AI TRiSM (Trust, Risk, and Security Management) for lifecycle controls – to bridge the policy-to-practice gap.

In SAP, which supports mission-critical functions such as finance, supply chain, and HR, the discipline that TRiSM provides is what keeps AI-driven decisions accurate, secure, auditable, and aligned with regulatory requirements like the EU AI Act.

The decision to automate mission-critical processes without governance is essentially a choice to prioritize short-term velocity over long-term viability. That trade-off is how Frankenstein systems appear: fragile landscapes with high operating costs, unpredictable behavior, and growing technical and compliance risk.

To avoid this outcome, governance must be translated into concrete, enforceable mechanisms. In practice, that means establishing a clear foundation across data, models, risk, and accountability.

Key components of effective governance:

- Data Governance: Define who can access data, maintain data quality, and ensure personal data is handled appropriately.

- Model Management: Set up protocols for training, testing, and approving AI models prior to deployment.

- Risk Management: Identify potential pitfalls in automation, develop backup plans, and monitor errors or biases.

- Roles & Responsibilities: Clarify ownership and monitoring responsibilities for AI solutions.

- Audit Trails: Keep comprehensive logs of AI actions and the rationale behind decisions to support accountability.

“Alone, these components achieve little,” says Karyna. “Applied together, consistently and across the organization, they create a system that keeps AI and automation reliable, secure, and accountable.”
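As a rough illustration of how the audit-trail component might be expressed in code rather than on paper, here is a minimal sketch. Every name in it (`AuditRecord`, `AuditTrail`, the model identifier, the owner) is hypothetical and chosen only for illustration. It assumes an in-memory log, whereas a production system would write to durable, tamper-evident storage.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
import json


@dataclass(frozen=True)
class AuditRecord:
    """One logged AI action together with the rationale behind it."""
    model_id: str   # hypothetical identifier of the model that acted
    action: str     # what the model did or recommended
    rationale: str  # human-readable reason for the decision
    owner: str      # person or team accountable for the model
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class AuditTrail:
    """Append-only, in-memory log (an assumption made for this sketch)."""

    def __init__(self) -> None:
        self._records: list[AuditRecord] = []

    def log(self, record: AuditRecord) -> None:
        self._records.append(record)

    def for_model(self, model_id: str) -> list[AuditRecord]:
        """Return every record logged for the given model."""
        return [r for r in self._records if r.model_id == model_id]

    def export_json(self) -> str:
        """Serialize the full trail, for example to hand to an auditor."""
        return json.dumps([asdict(r) for r in self._records], indent=2)


# Usage: record one decision and the reason behind it.
trail = AuditTrail()
trail.log(AuditRecord(
    model_id="invoice-matcher",
    action="flagged invoice for manual review",
    rationale="amount deviates from purchase order",
    owner="finance-ops",
))
```

The point of the sketch is that rationale and ownership are captured at the moment of the action, so accountability does not depend on reconstructing events afterwards.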

The Solution: Institutionalizing Governance through a Center of Excellence

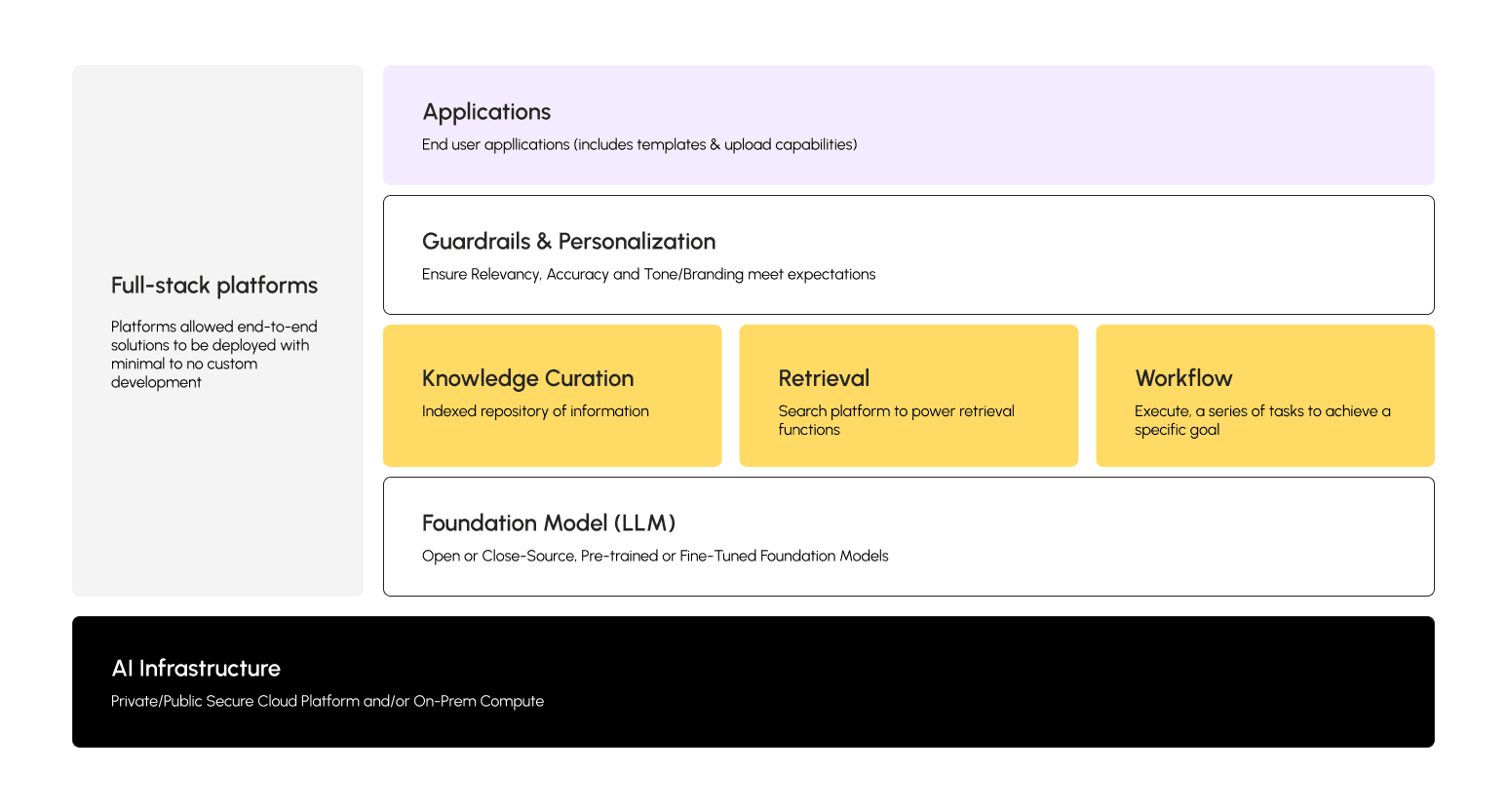

Central to this approach is the Automation Center of Excellence (CoE). It provides a structured, repeatable framework for managing AI and automation across the enterprise, ensuring that initiatives are safe, compliant, and aligned with business goals.

At its core, the CoE is a bridge between strategy and execution. It defines how AI initiatives are launched, monitored, and improved, balancing innovation with risk management. By consolidating expertise, policies, and best practices in a single hub, it ensures that AI can become a coherent, scalable engine for value creation.

The diagram above illustrates this framework: how the CoE integrates enterprise strategy, operational governance, and domain-level execution.

But Won’t an AI Center of Excellence Just Add Bureaucracy?

Some argue that setting up a CoE introduces extra layers and slows decision-making. The reality is the opposite. Absent a centralized governance framework, AI and automation are managed case by case. Hallucinations are fixed after the fact, bias reviews are repeated across teams, and compliance becomes an ongoing recovery effort rather than a controlled process.

A properly structured CoE flips this script. Strategy is defined once at the enterprise level, operational controls monitor AI lifecycles automatically, and domain teams execute without reinventing the wheel.

In SAP environments, this can accelerate critical processes like Quote-to-Cash rather than slow them down, turning governance from a perceived drag into a speed lever. Without it, organizations are reactive, buried in the Frankenstein-style chaos their own systems have produced.

The Role of the Automation CoE

An AI Center of Excellence serves as the cornerstone of successful enterprise AI adoption by bridging governance, innovation, and execution. It ensures that AI initiatives are not just launched but sustained responsibly across the enterprise. It provides essential services including:

- Expert Guidance: Offering insights into best practices and industry standards.

- Policy Development: Crafting policies that govern AI use in line with ethical standards.

- Risk Assessment: Identifying potential risks and devising mitigation strategies.

- Training: Empowering teams with the knowledge to navigate AI responsibly.

- Cross-Functional Collaboration: Ensuring that AI initiatives are aligned with organizational goals across all departments.

Beyond these functions, the CoE also acts as a cultural anchor. It is where AI literacy is nurtured, where teams learn to balance ambition with caution, and where emerging challenges (such as novel bias patterns or unexpected integration issues) are surfaced before they spiral into enterprise-wide problems.

Defining Your Center of Excellence’s Operating Model

To maximize the effectiveness of the CoE, organizations must carefully design its structure and operational processes. As Karyna notes, “A CoE is only as effective as the system around it. Without clearly defined processes and accountability, even the best-intentioned team ends up firefighting instead of innovating.”

That effectiveness depends on several core elements of the operating model, including:

- Process & Structure: Set up clear procedures governing CoE operations, including reporting requirements to ensure transparency.

- Communication & Collaboration: Develop communication channels that facilitate interaction between the CoE and various stakeholders, including business units, IT, and legal departments.

- Accountability & Oversight: Define metrics for measuring CoE performance and establish mechanisms for accountability to leadership and the broader organization.

Operational design also shapes how the CoE interacts with the wider organization. By embedding decision-making workflows, approval gates, and knowledge-sharing loops directly into daily operations, the CoE ensures that AI governance is lived and experienced by the teams doing the work.
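To make the idea of an embedded approval gate more concrete, the following is a minimal sketch of a deployment step that refuses to run until every required sign-off is recorded. The roles in `REQUIRED_APPROVALS`, the `ModelRelease` class, and the `deploy` function are all hypothetical, since the article does not prescribe specific roles or tooling.

```python
from dataclasses import dataclass, field

# Hypothetical sign-offs a CoE might require before deployment.
REQUIRED_APPROVALS = frozenset({"risk", "legal", "business_owner"})


class ApprovalGateError(Exception):
    """Raised when deployment is attempted without all sign-offs."""


@dataclass
class ModelRelease:
    name: str
    approvals: set[str] = field(default_factory=set)

    def approve(self, role: str) -> None:
        """Record a sign-off; roles outside the required set are rejected."""
        if role not in REQUIRED_APPROVALS:
            raise ValueError(f"unknown approver role: {role}")
        self.approvals.add(role)

    def missing_approvals(self) -> set[str]:
        """Return the required roles that have not yet signed off."""
        return set(REQUIRED_APPROVALS - self.approvals)


def deploy(release: ModelRelease) -> str:
    """Deploy only when the gate is satisfied; otherwise fail loudly."""
    missing = release.missing_approvals()
    if missing:
        raise ApprovalGateError(
            f"{release.name} blocked; missing: {', '.join(sorted(missing))}"
        )
    return f"{release.name} deployed"
```

Because the check lives inside the deployment path itself, skipping it requires changing the code instead of merely ignoring a policy document, which is the sense in which governance becomes part of the architecture.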

The Bottom Line

Ultimately, allowing intelligent automation to grow without a true governance framework is a deliberate avoidance of responsibility. It is the precise process of building Frankenstein’s monster: assembling powerful parts without a plan for the living, thinking, operational whole. The result, more often than not, is a disappointment rather than a breakthrough.

Embedding governance into the very architecture of their systems is the non-negotiable foundation for organizations. It transforms automation from a tactical tool into a strategic asset, ensuring that the pursuit of efficiency today does not compromise the integrity and agility of the entire organization tomorrow.